January 2026 Update

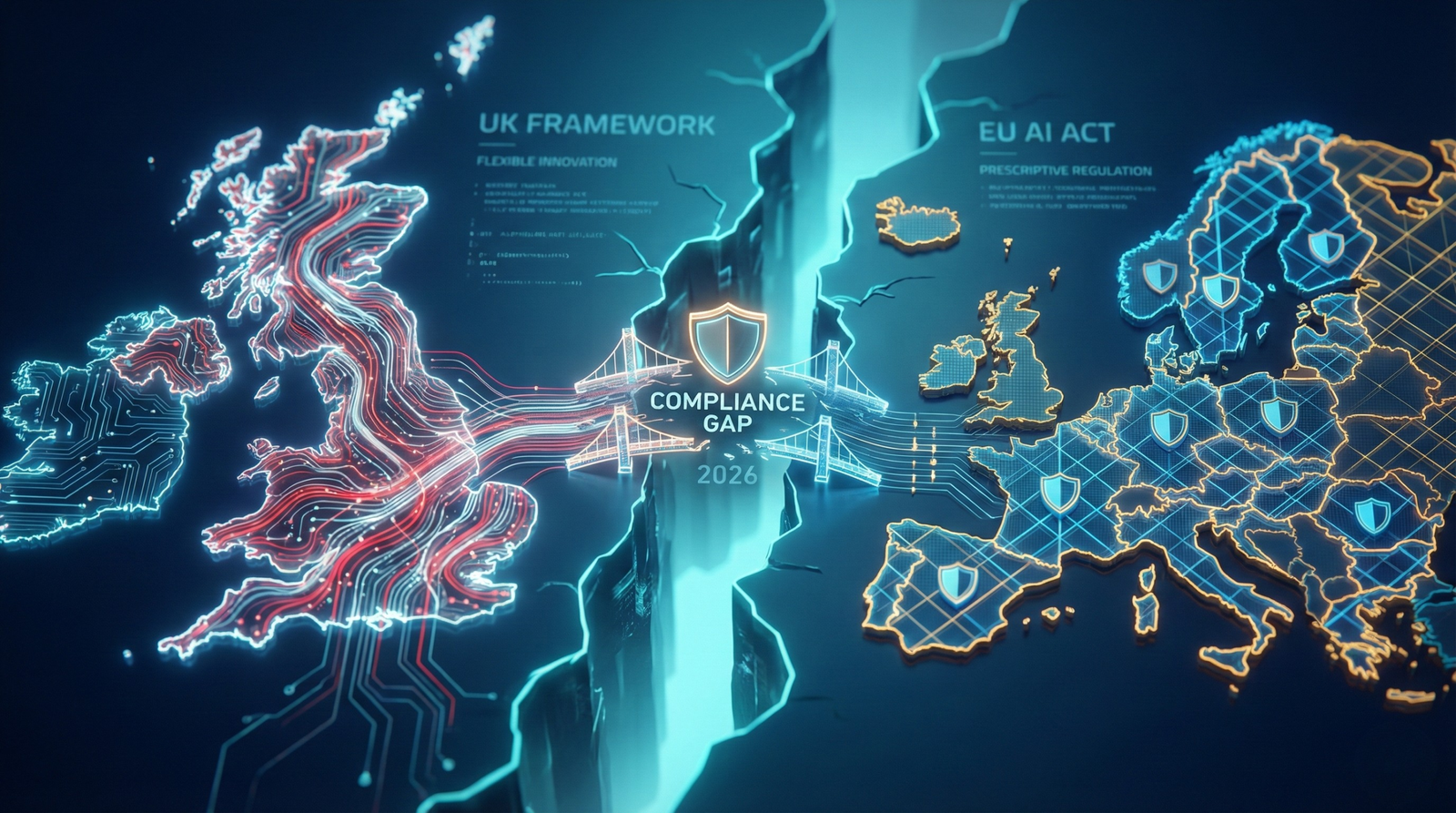

For British enterprise, the UK-EU AI compliance gap is now a critical reality. The initial grace periods under the European Union’s Artificial Intelligence Act (EU AI Act) have expired for General Purpose AI models, and the final countdown for High-Risk systems has begun. For UK-based enterprises, the theoretical debates of 2024 and 2025 regarding sovereignty and regulatory divergence have solidified into an immediate operational reality.

While the United Kingdom continues to pursue a distinct, pro-innovation regulatory framework—eschewing a single “UK AI Act” in favour of a sector-led model—British businesses are finding themselves in a complex bind. The geographical border may be clear, but the digital border is permeable. For any UK enterprise with customers, data subjects, or supply chains touching the European Union, the “Brussels Effect” has taken hold.

The central challenge for UK Chief Information Officers (CIOs) and Chief Risk Officers (CROs) in 2026 is no longer simply about adopting Generative AI; it is about navigating the friction between two diverging governance regimes. On one side sits the EU’s prescriptive, horizontal legislation with tiered risk categories and mandatory conformity assessments. On the other lies the UK’s flexible, outcome-focused reliance on existing regulators such as the ICO, FCA, and CMA.

This article examines why, for many mid-to-large UK enterprises, the path of least resistance (and greatest commercial safety) may paradoxically involve aligning with EU standards even for domestic operations. It explores where the rules diverge, the financial implications of dual compliance, and how enterprise leadership should structure governance.

The UK-EU AI Compliance Gap in 2026: Flexibility vs. Prescriptiveness

The philosophical split between London and Brussels has created two distinct operating environments. Understanding this divide is critical for enterprise strategy.

The EU’s Centralised “Hard Law” Model

The EU AI Act already applies to prohibited practices (banned since February 2025) and General Purpose AI models (since August 2025). The industry is bracing for the rules on “High-Risk” systems, most of which apply from August 2026.

The Act functions as horizontal product-safety legislation, comparable to CE marking for physical goods. If an AI system falls into a “High-Risk” category—such as those used in employment decisions, creditworthiness, biometric identification, or critical infrastructure—it must undergo formal conformity assessments before being placed on the EU market.

For UK enterprises, the extraterritorial scope of the Act is decisive. A London-based firm that places AI systems on the EU market, or whose systems produce outputs used in the EU, falls within scope regardless of where it is established. The EU’s AI Office coordinates enforcement and directly supervises General Purpose AI models, which is intended to reduce interpretive ambiguity and limit room for regulatory discretion.

The UK’s Sector-Led Framework

By contrast, the UK has avoided introducing a single, overarching AI statute. Instead, it relies on existing regulators—the ICO, FCA, CMA, and sector-specific bodies—operating under shared principles: safety and robustness, transparency and explainability, fairness, accountability, and contestability.

For enterprises, this approach offers flexibility and speed. A fintech deploying an AI-driven customer service tool, for example, must satisfy the FCA on consumer outcomes and the ICO on data processing, rather than seeking approval from a central AI authority. However, this flexibility comes at the cost of fragmentation. Compliance is often guidance-led rather than statute-driven, and responsibility for interpretation sits firmly with the organisation.

The Brussels Effect on UK Enterprises

Despite the UK’s intention to encourage agility, the economic gravity of the EU Single Market is exerting significant pressure on British firms. The “Brussels Effect” describes how companies voluntarily adopt the strictest regulatory standard globally to reduce operational complexity.

The “One-Product” Strategy

For many UK enterprises, maintaining separate AI systems—one aligned with EU requirements and another optimised for the UK—is commercially inefficient. Forked codebases, duplicated documentation, and parallel risk processes introduce technical debt that often outweighs the benefits of regulatory flexibility.

As a result, a growing number of organisations are treating the EU AI Act as a baseline rather than an external constraint. If an AI system must meet EU transparency and audit requirements to operate in France or Germany, deploying the same standard domestically simplifies governance and reduces long-term risk.

Supply Chain Pressure

This alignment extends beyond exporters. UK firms with no direct EU presence increasingly encounter cross-border AI compliance expectations through procurement and partnership agreements. Multinational clients are standardising vendor requirements to minimise third-party risk, often insisting on EU-equivalent governance regardless of jurisdiction. In effect, EU standards are propagating through private contracts, bypassing national regulatory divergence altogether.

Critical Friction Points Where Rules Diverge

Although alignment is often pragmatic, material differences remain between the UK and EU regimes. These differences require deliberate management.

Transparency and Watermarking

Under Article 50 of the EU AI Act, AI systems that interact with humans or generate synthetic media are subject to mandatory disclosure requirements. Synthetic outputs must be marked in a machine-readable format as artificially generated, and deepfakes must be disclosed as such.

The UK approach remains principles-based. Transparency obligations arise primarily through data protection and consumer protection law rather than explicit AI-specific mandates. In practice, this can result in different user experiences: an AI-driven interface that operates seamlessly for UK users may require explicit disclosures for EU users, increasing UX complexity and development overhead.
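One way to contain that overhead is to keep the disclosure decision in a single function rather than scattering it through interface code. The sketch below is illustrative only: the `Interaction` type, the region codes, and the notice wording are hypothetical, and the rule that UK users receive no notice by default is a simplifying assumption rather than a statement of what either regime requires in a given case.

```python
from dataclasses import dataclass

# Hypothetical region code; a real service would derive the user's region
# from its own account or geolocation data.
EU_REGION = "EU"


@dataclass(frozen=True)
class Interaction:
    user_region: str          # e.g. "EU" or "UK"
    is_ai_generated: bool     # True when the reply comes from an AI system
    is_synthetic_media: bool  # True for generated images, audio or video


def required_notices(interaction: Interaction) -> list[str]:
    """Return the disclosure notices to show for one interaction.

    Assumption: EU users get explicit notices; other users get none by
    default, because UK duties depend on context this sketch does not model.
    """
    notices: list[str] = []
    if interaction.user_region != EU_REGION:
        return notices
    if interaction.is_ai_generated:
        notices.append("You are interacting with an AI system.")
    if interaction.is_synthetic_media:
        notices.append("This content was artificially generated.")
    return notices
```

Centralising the decision this way means a later change in UK guidance would require editing one function rather than every screen that surfaces AI output.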

Data Governance and Training Data

Data governance represents one of the most significant points of divergence. The EU requires high-risk AI systems to be trained on datasets meeting stringent quality and representativeness criteria. For large language models, this standard is particularly demanding.

UK guidance, informed by ICO principles and ongoing data reform discussions, is comparatively pragmatic, provided appropriate safeguards exist. This divergence has led some enterprises to segregate data infrastructure—maintaining a “gold-standard” dataset for EU-facing models and a broader dataset for UK-internal experimentation.

Risk Classification and Assessments

An AI compliance checklist for 2026 looks markedly different across jurisdictions.

- EU: Compliance is category-driven. If a system falls within a high-risk classification, formal impact and conformity assessments are mandatory.

- UK: Compliance is outcome-driven. Regulatory concern arises when demonstrable harm or legal breaches occur.

This procedural gap can create exposure. An internally approved tool in the UK may become non-compliant if accessed by EU users without the requisite EU-style assessments.
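The difference between the two triggers can be made concrete with a small triage function. This is a minimal sketch under stated assumptions: the area names are an illustrative subset taken from the examples in this article (the Act’s own annexes are the authoritative list), and the `Trigger` values and function signature are hypothetical.

```python
from enum import Enum


class Trigger(Enum):
    EU_CONFORMITY_ASSESSMENT = "EU assessment required before deployment"
    UK_REGULATOR_REVIEW = "UK regulator engagement on harm or breach"


# Illustrative subset only; not the Act's full list of high-risk areas.
HIGH_RISK_AREAS = {
    "employment",
    "creditworthiness",
    "biometric_identification",
    "critical_infrastructure",
}


def compliance_triggers(
    area: str, eu_users: bool, uk_users: bool, harm_or_breach: bool
) -> set[Trigger]:
    """Return which regime-specific triggers fire for one AI system."""
    triggers: set[Trigger] = set()
    # EU: category-driven. The use-case category alone triggers an assessment.
    if eu_users and area in HIGH_RISK_AREAS:
        triggers.add(Trigger.EU_CONFORMITY_ASSESSMENT)
    # UK: outcome-driven. Concern arises once harm or a breach has occurred.
    if uk_users and harm_or_breach:
        triggers.add(Trigger.UK_REGULATOR_REVIEW)
    return triggers
```

For a recruitment tool approved internally for UK use with no reported harm, the function returns an empty set. Flip `eu_users` to `True` and the EU trigger fires immediately, which is the exposure described above.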

Financial Implications of Dual Compliance

The choice between divergence and alignment has direct financial consequences. Compliance is no longer a peripheral legal issue; it is a core component of enterprise IT and risk budgets.

The Cost of the Gap

Enterprises operating across the UK and EU face rising compliance overheads, including duplicated legal advice, parallel audits, and complex geo-segmentation of AI services. Certification by EU-accredited bodies often runs alongside separate UK regulatory engagements, compounding cost and administrative burden.

These pressures directly intersect with enterprise ROI considerations, particularly in regulated sectors. As explored in our previous report on the ROI of Generative AI in Banking, sustainable value is closely linked to regulatory certainty.

Asymmetric Legal Exposure

The EU AI Act introduces penalties of up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited practices. UK enforcement mechanisms, while significant, typically allow greater scope for regulatory dialogue and remediation.
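The ceiling for prohibited practices is the higher of the two figures, so exposure scales with company size. The arithmetic can be sketched as follows; the function name is hypothetical, turnover is taken in whole euros, and the sketch ignores any special treatment of smaller companies.

```python
FIXED_CAP_EUR = 35_000_000
TURNOVER_RATE_PERCENT = 7


def max_fine_prohibited_practices(global_turnover_eur: int) -> int:
    """Upper bound in euros: the higher of the fixed cap and 7% of turnover."""
    turnover_based = global_turnover_eur * TURNOVER_RATE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


# A group with EUR 2 billion in global turnover: 7% is EUR 140 million.
print(max_fine_prohibited_practices(2_000_000_000))  # 140000000
# A firm with EUR 100 million in turnover: the fixed cap dominates.
print(max_fine_prohibited_practices(100_000_000))    # 35000000
```

The two figures meet at €500 million in turnover; above that point the percentage-based ceiling is the binding one.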

This asymmetry drives conservative decision-making. From a board-level risk perspective, avoiding EU-level fines often becomes the dominant priority, shaping governance choices and diverting investment away from experimental use cases that UK policymakers aim to encourage.

Strategic Action Plan for UK CIOs and CROs

In this environment, passive observation is insufficient. Effective governance requires deliberate alignment and internal discipline.

1. Adopt a “High-Water Mark” Governance Model

For most enterprises with any international exposure, aligning internal governance with EU high-risk classifications provides the most robust baseline. Systems capable of meeting EU requirements are unlikely to fall short of UK expectations.

2. Maintain a Comprehensive AI Inventory

Effective governance begins with visibility.

- Audit all AI systems against EU high-risk categories.

- Identify informal or “shadow AI” deployments interacting with EU data or users.
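In practice, an inventory can start as a simple structured record per system. The following is a minimal sketch, not a prescribed schema: the `AISystemRecord` fields and both helper functions are hypothetical, and the high-risk area is assumed to be filled in by a human reviewer rather than inferred automatically.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    name: str
    owner: str
    use_case: str
    formally_approved: bool  # False suggests a "shadow AI" deployment
    touches_eu: bool         # interacts with EU data or users
    eu_high_risk_area: Optional[str] = None  # set by a reviewer, if any


def high_risk_candidates(inventory: list[AISystemRecord]) -> list[AISystemRecord]:
    """Systems a reviewer has mapped to an EU high-risk area."""
    return [r for r in inventory if r.eu_high_risk_area is not None]


def shadow_eu_exposure(inventory: list[AISystemRecord]) -> list[AISystemRecord]:
    """Unapproved systems that interact with EU data or users."""
    return [r for r in inventory if not r.formally_approved and r.touches_eu]
```

Even a spreadsheet export loaded into records like these makes both audit questions answerable with a one-line filter, which is usually enough to prioritise the first round of reviews.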

3. Reassess Vendor and Partner Contracts

Third-party risk is a primary source of exposure.

- Require vendors to demonstrate EU-level transparency and documentation.

- Strengthen indemnity provisions related to data provenance and intellectual property.

4. Formalise Human Oversight

Both regimes emphasise accountability, albeit differently.

- Establish documented human-in-the-loop processes with genuine authority to override AI decisions.

- Ensure oversight mechanisms satisfy the human oversight requirements of Article 14 of the EU AI Act while reinforcing UK accountability principles.

Conclusion

As 2026 progresses, the UK’s ambition to remain a flexible, pro-innovation AI environment remains compelling. However, the commercial reality for enterprise is inherently global. Regulatory divergence has created a complex UK-EU AI compliance gap that demands strategic, not reactive, management.

The objective for UK leaders is not to choose between London and Brussels, but to design governance structures resilient enough to satisfy EU scrutiny while retaining the agility encouraged by the UK framework. Organisations that achieve this balance will be positioned to deploy AI safely and at scale, regardless of jurisdiction.

Ultimately, robust governance underpins sustainable value creation. As enterprise experience with AI operations has already shown, confidence—not capability alone—determines success. In 2026, that confidence is built on compliance.