Shadow AI is no longer a fringe risk for UK businesses: two-thirds of organisations admit their staff regularly use unapproved AI tools at work.

Shadow AI is no longer an IT problem — it’s a governance problem.


Shadow AI in UK Business: The Short Version

Shadow AI — the use of AI tools that nobody in IT sanctioned or monitors — has become the default behaviour in most UK workplaces. SAP and Oxford Economics research published in February 2026 puts the number at 68% of UK businesses reporting employees who regularly use unapproved AI tools. A separate Microsoft UK Cyber Pulse report from March 2026 found 62% of UK businesses have deployed AI agents — many of them operating in silos, outside any formal governance.

Here’s the uncomfortable part. Only 7% of UK businesses have an enterprise-wide AI strategy (SAP/Oxford Economics, 2026). Sixty per cent haven’t given their employees comprehensive AI training. Boards are signing off on AI budgets at record speed, but nobody has told staff which tools are approved, which aren’t, and what happens to the customer data they’ve just pasted into a free ChatGPT window.

This isn’t a technology problem. It’s a governance failure. And UK businesses that don’t close the gap are carrying financial, regulatory, and operational risk that grows every week.

Why Shadow AI Is Growing in UK Businesses

The pattern is familiar. Shadow AI is following the same trajectory as shadow IT a decade ago — only faster, and with sharper consequences.

The tools are free and frictionless. Any employee with a browser can access ChatGPT, Gemini, Claude, or a dozen smaller generative AI apps without IT ever knowing about it. BlackFog’s January 2026 survey found 86% of employees now use AI tools weekly for work. A third of them rely on free consumer versions with no enterprise security controls whatsoever.

Employers, meanwhile, haven’t provided alternatives. Microsoft UK’s research found 28% of employees turn to unapproved tools specifically because their company doesn’t offer an approved option. Another 41% say they use what they know from home. Can you blame them?

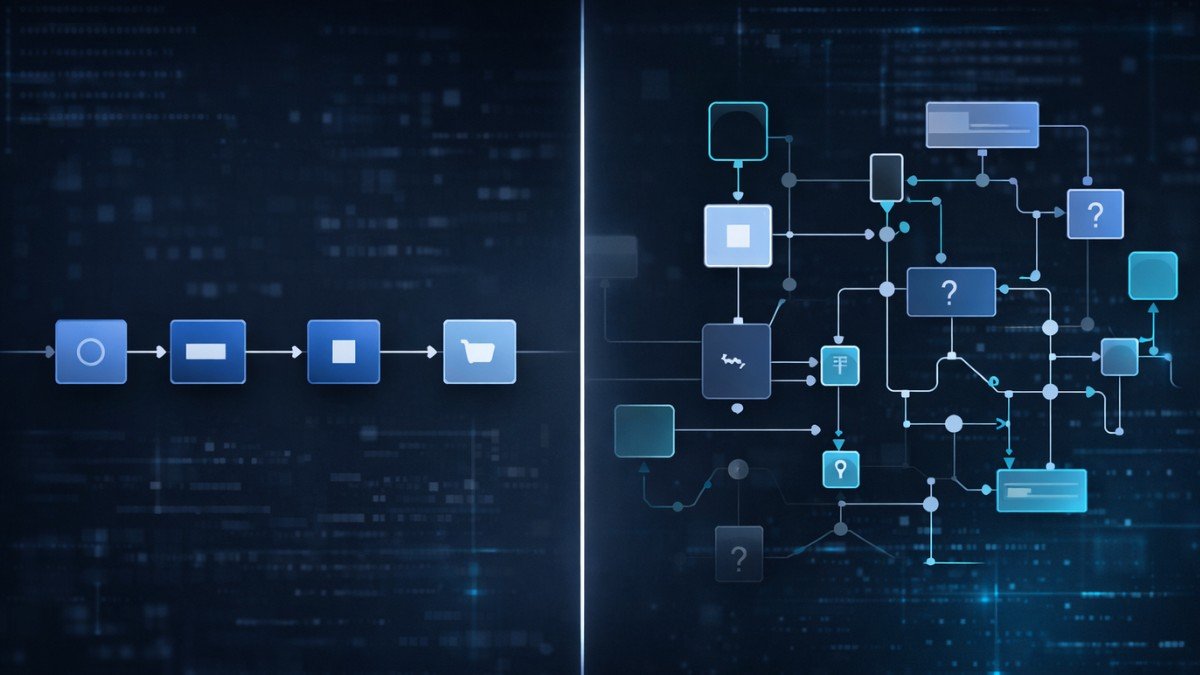

And then there’s the structural mismatch. The way UK companies are actually deploying AI in operations reveals a workforce that has adopted AI faster than its employers can govern it. Employees aren’t waiting for strategy documents. They’re solving problems now, with whatever’s available.

The Scale of the Problem: Tool Sprawl and Ungoverned AI

This goes well beyond a few people using ChatGPT on their lunch break. It’s systemic.

Salesforce’s 2026 Connectivity Report found UK enterprises now run 796 applications on average. Only 33% of those are integrated with each other. Every unapproved AI tool added to that stack increases the attack surface, fragments data flows, and creates compliance blind spots that no audit can cover after the fact.

This sprawl is also one reason UK enterprises are consolidating their AI tool stacks. CIOs are trying to rationalise their AI estates, but shadow AI keeps adding tools faster than procurement can take them away. Three-quarters of UK IT leaders in the Salesforce survey said they’re worried AI agents will create more complexity than value. That worry looks well-founded.

The financial cost of shadow AI exposure for UK businesses is not theoretical. IBM’s Cost of Data Breach research found 20% of organisations traced a breach directly to shadow AI. Those breaches added an average of £200,000 to total costs, on top of whatever reputational damage followed.

The Compliance Risk of Ungoverned AI

For UK businesses, shadow AI risk extends beyond productivity: it creates exposure under multiple regulatory frameworks at once.

UK GDPR requires organisations to demonstrate control over personal data processing. When an employee pastes customer records, contract clauses, or financial data into an unapproved AI tool, that control is gone. Often nobody even knows it happened.

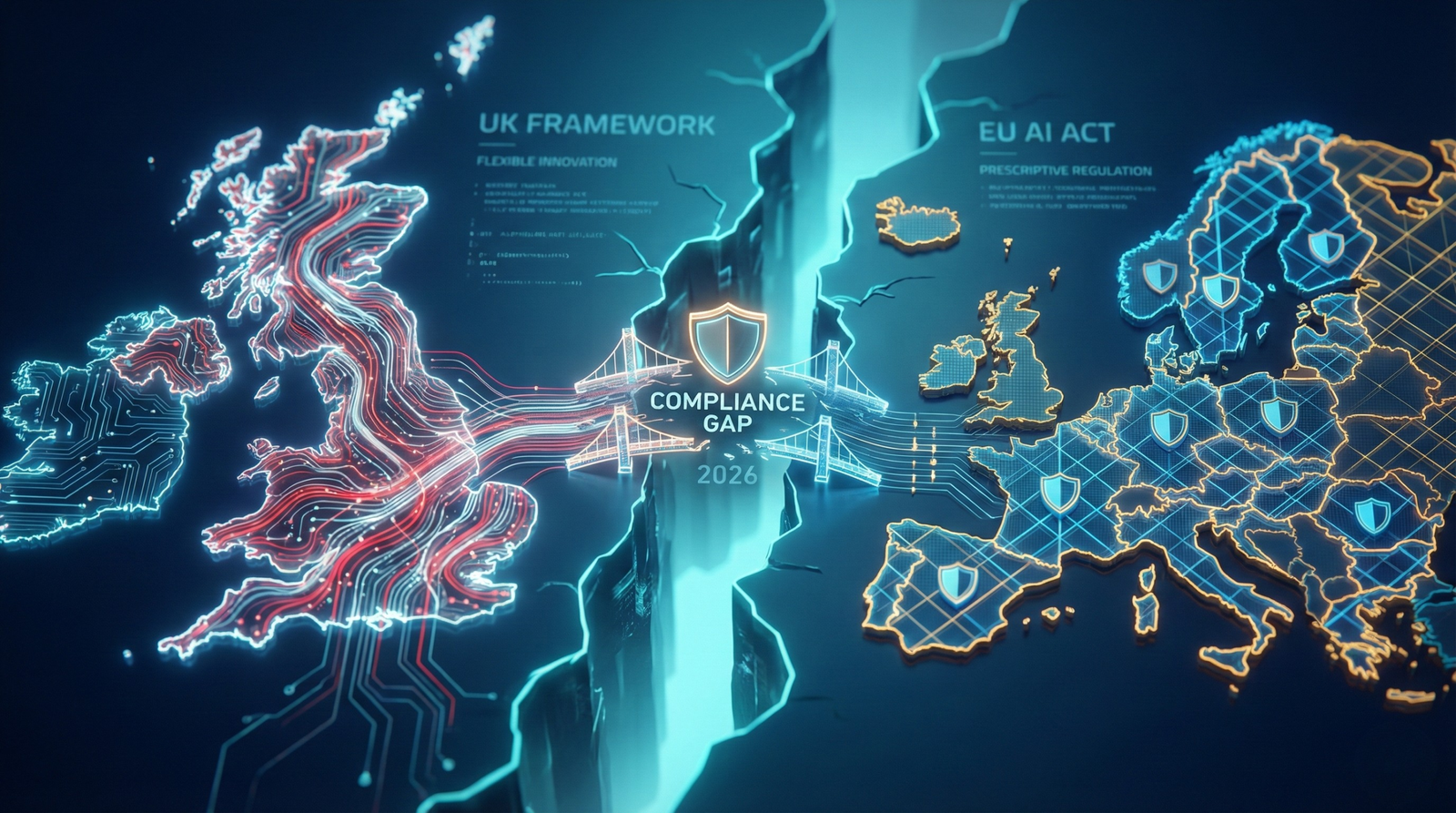

The growing compliance gap between UK and EU AI regulation makes this worse. Any UK business operating in — or selling to — the EU market faces the EU AI Act’s extraterritorial requirements, with full high-risk system obligations kicking in from August 2026. Shadow AI deployments are undocumented, unclassified, and ungoverned by definition. They cannot meet those requirements.

The ICO published its first report on agentic AI in January 2026, explicitly addressing data protection and privacy risks from autonomous AI systems. The regulator confirmed active monitoring of AI deployments throughout 2026. For businesses running ungoverned tools, that monitoring is a present enforcement risk, not a future possibility.

Meanwhile, the Trustmarque AI Governance Index 2025 found only 7% of UK businesses have fully embedded AI governance frameworks. Fifty-four per cent have minimal governance or none at all. The regulatory bar is rising. Most organisations are structurally unprepared to clear it.

What Shadow AI Actually Costs

Beyond compliance, shadow AI imposes costs that most boards aren’t tracking.

Studio Graphene surveyed 500 UK senior decision-makers in March 2026. The headline: 78% of businesses now use AI in some capacity, but only 31% report a positive return on investment. Eighteen per cent said their AI projects outright failed. And only 41% of AI-using organisations can even define what success looks like. That last number is the one that should worry boards most.

Shadow AI contributes directly to this ROI failure. When adoption is fragmented, uncoordinated, and invisible to leadership, you can’t measure what you’re spending, what you’re getting, or whether any of it’s working. Board frustration builds. Budgets get questioned. The businesses that cut spend prematurely lose ground to competitors who invested in governance first.

The SAP/Oxford Economics report calculated that the average UK business generates roughly £2.7 million in returns from AI, but only where strategy, governance, and training are aligned. Without that alignment, shadow AI becomes an expensive, invisible liability.

How to Close the Shadow AI Gap

Closing the shadow AI gap doesn’t mean banning AI or locking down employee access. That approach fails for the same reason prohibition always fails: demand doesn’t vanish because you refuse to meet it.

Start with visibility. Most IT teams have no idea which AI tools employees are actually using. Run a non-punitive audit — a straightforward survey across every department. Ask what tools people have adopted, what data they’re putting into them, and what problems they’re solving. The answers will reveal both the risk and the unmet need driving it.
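To make the audit concrete, here is a minimal Python sketch of how the survey answers might be tallied. Everything in it is an assumption for illustration: the `SurveyResponse` fields, the `SENSITIVE` categories, and the sample answers are hypothetical, not taken from any of the research cited here. It simply counts how many people use each tool and flags the tools that receive sensitive data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SurveyResponse:
    """One answer from the non-punitive audit (all fields are hypothetical)."""
    department: str
    tool: str
    data_types: tuple  # what the respondent says they put into the tool
    problem: str       # the job the tool is doing for them


# Illustrative categories only; your own data classification should drive this.
SENSITIVE = {"customer records", "contract clauses", "financial data"}


def summarise(responses):
    """Return (tool, user_count, receives_sensitive_data), most-used tool first."""
    usage = Counter(r.tool for r in responses)
    flagged = {r.tool for r in responses if SENSITIVE & set(r.data_types)}
    return [(tool, count, tool in flagged) for tool, count in usage.most_common()]


# Made-up sample answers, purely to show the shape of the output.
responses = [
    SurveyResponse("Finance", "ChatGPT (free)", ("financial data",), "summarising reports"),
    SurveyResponse("Marketing", "ChatGPT (free)", ("draft copy",), "first drafts"),
    SurveyResponse("Marketing", "Gemini", ("draft copy",), "campaign ideas"),
]

for tool, users, sensitive in summarise(responses):
    print(f"{tool}: {users} user(s), sensitive data: {'yes' if sensitive else 'no'}")
```

The tools at the top of that list with a "yes" beside them are the ones to replace first, and the `problem` answers tell you what the approved alternative has to do.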

Then give people something approved to use. Employees turn to shadow AI because nobody offered them an alternative. Define a short list of sanctioned tools — three to five, maximum — that meet your data residency, security, and compliance requirements. Make access frictionless. If the approved option is harder to reach than ChatGPT, people will keep using ChatGPT. That’s not defiance. It’s human nature.

Finally, train people on guardrails — not just on the tools themselves. SAP’s research found 60% of UK businesses haven’t given employees comprehensive AI training. Effective training covers what data categories are off-limits, what outputs require human review, and what the organisation’s AI use policy actually says. Role-specific training outperforms generic modules every time. A finance team’s guardrails look nothing like a marketing team’s.

Tools Worth Considering

The most practical first step for most UK businesses is deploying a workflow automation platform that centralises AI usage, enforces data handling rules, and gives you audit visibility you currently don’t have.

Our comparison of the leading UK-compliant workflow tools covers Make.com and Zapier — the two most widely adopted options for UK businesses — with UK GDPR compliance, GBP pricing, and integration depth assessed side by side. Both platforms let you build sanctioned AI workflows that replace the ad hoc, ungoverned usage driving shadow AI risk in the first place.

For businesses needing enterprise-grade AI governance, tools like Microsoft Purview, Securiti, and IBM watsonx.governance offer deeper visibility into AI usage patterns, data classification, and compliance reporting. They sit at a higher price point — better suited to mid-market and enterprise organisations with dedicated IT resource.

The goal isn’t to eliminate AI from your workplace. The fix for shadow AI is making governed AI the path of least resistance.

FAQ

What is shadow AI?

Shadow AI is any AI tool used by employees for work without formal approval or oversight from the organisation’s IT or compliance function. That includes free consumer tools like ChatGPT, Google Gemini, and smaller generative AI apps used to draft content, analyse data, or automate tasks without anyone in IT knowing about it.

Is shadow AI illegal in the UK?

Not in itself. But using unapproved AI tools to process personal data, customer records, or commercially sensitive information can breach the UK GDPR and the Data Protection Act 2018, which the ICO enforces, or sector-specific rules such as those set by the FCA. The organisation, as data controller, carries the primary liability rather than the individual employee.

How common is shadow AI in UK businesses?

Shadow AI usage in UK businesses is widespread. SAP and Oxford Economics research from February 2026 found 68% of UK organisations report employees regularly using unapproved AI tools. Microsoft UK’s March 2026 research found 62% of UK businesses have deployed AI agents, many without full governance visibility over what those agents are doing.

How do I create an AI use policy for my business?

Start simple. Define which AI tools are approved. Specify what data categories must never go into any AI tool. Require human review of all AI-generated outputs used in external communications. Set quarterly review cycles to update the policy as tools and regulations change. You don’t need a 50-page document — you need a clear, enforceable framework your teams will actually follow.
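If it helps to see how small such a framework can be, the four rules above fit in a few lines of Python. This is a sketch under stated assumptions, not a compliance tool: the `AIUsePolicy` class, its field names, the 91-day review interval, and the example tool names are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class AIUsePolicy:
    """A hypothetical AI use policy expressed as data."""
    approved_tools: frozenset
    banned_data: frozenset
    last_reviewed: date
    review_every_days: int = 91  # assumed stand-in for "quarterly"

    def check(self, tool, data_categories, external):
        """Return findings for one proposed use; an empty list means no issues."""
        findings = []
        if tool not in self.approved_tools:
            findings.append(f"{tool} is not an approved tool")
        for category in sorted(set(data_categories) & self.banned_data):
            findings.append(f"{category} must never go into an AI tool")
        if external:
            findings.append("human review required before external use")
        return findings

    def review_due(self, today):
        """True once the review interval has elapsed since the last review."""
        return today - self.last_reviewed >= timedelta(days=self.review_every_days)


# Placeholder names, not product recommendations.
policy = AIUsePolicy(
    approved_tools=frozenset({"Approved Assistant"}),
    banned_data=frozenset({"customer records"}),
    last_reviewed=date(2026, 1, 5),
)

print(policy.check("Free Chatbot", ["customer records"], external=True))
print(policy.review_due(date(2026, 4, 6)))
```

Writing the policy down as data rather than prose forces the specifics: someone has to name the approved tools and the banned data categories, which is exactly what a 50-page document tends to avoid.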

If your team is already using AI tools you didn’t approve, start with our breakdown of the best UK-compliant automation platforms — and build your approved stack before the ICO comes asking questions.