UK employers are deploying AI recruitment tools at pace, but the ICO’s March 2026 report made clear that speed without safeguards is a compliance risk. Direct letters to 16 named organisations and a live consultation open until 29 May 2026 signal that enforcement is likely to follow. Here is what the rules now require.

The Short Version

The ICO’s position on the AI recruitment tools UK employers currently use is direct: most deployments are non-compliant. After reviewing evidence from more than 30 UK employers, the ICO found that most use AI to screen and score candidates in ways that constitute automated decision-making (ADM) under UK data protection law, and most are not applying the required safeguards. Many employers don’t even acknowledge that automated decision-making is occurring.

The Data (Use and Access) Act 2025, in force from 5 February 2026, updated the framework — but the ICO’s expectations tightened alongside it. Compliance is not optional, and the window for getting ahead of enforcement is narrowing.

What the ICO Found — and Why It Matters Now

On 31 March 2026, the ICO published a recruitment ADM report drawing on evidence from over 30 UK employers, accompanied by draft guidance and letters to 16 named organisations that have since committed to act. The central finding: many employers told the ICO their AI tools were used only for decision support, with a human making the final call. In practice, the evidence showed something different — tools making substantive decisions, and human review that amounted to rubber-stamping.

The ICO defines meaningful human involvement precisely, and the definition applies to any AI recruitment tool a UK employer deploys. The reviewer must have the authority, discretion, and competence to change the outcome before it takes effect. Scanning an AI-generated shortlist and clicking approve does not meet that bar. The person must be capable of overriding the result and, in practice, must sometimes do so.
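One way to test whether review is more than rubber-stamping is to audit the review log for overrides and time spent. The following is a minimal sketch, not an ICO-defined test: the `Review` record, its field names, and both thresholds (`min_override_rate`, `min_median_seconds`) are hypothetical values chosen for illustration, and a real audit would need figures agreed with your DPO or legal team.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Review:
    """One human review of an AI recommendation (hypothetical log record)."""
    reviewer: str
    ai_recommendation: str   # e.g. "advance" or "reject"
    final_decision: str      # what the human actually decided
    seconds_spent: float     # time the reviewer had the record open


def override_rate(reviews):
    """Share of reviews where the human changed the AI's recommendation."""
    if not reviews:
        return 0.0
    overrides = sum(1 for r in reviews if r.final_decision != r.ai_recommendation)
    return overrides / len(reviews)


def looks_like_rubber_stamping(reviews, min_override_rate=0.02, min_median_seconds=30):
    """Flag a batch of reviews as a possible rubber-stamping pattern.

    Both thresholds are illustrative assumptions, not regulatory figures.
    A flag is a prompt for investigation, not a finding of non-compliance.
    """
    if not reviews:
        return False  # nothing to assess
    too_few_overrides = override_rate(reviews) < min_override_rate
    too_fast = median(r.seconds_spent for r in reviews) < min_median_seconds
    return too_few_overrides or too_fast


# Usage: fifty approvals at five seconds each, with no overrides, gets flagged.
batch = [Review("reviewer-1", "reject", "reject", 5.0) for _ in range(50)]
print(looks_like_rubber_stamping(batch))
```

A low override rate does not prove the reviewer lacks authority or competence, and a high one does not prove they have it. The check only surfaces batches worth examining against the authority, discretion, and competence criteria above.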

This failure pattern is the same one identified across UK business more broadly: the shadow AI governance gap in UK businesses, where tools are adopted informally, without governance, and generate decisions nobody in the organisation has formally acknowledged as automated. In recruitment, that creates specific and enforceable liability under UK GDPR.

What Changed Under the Data (Use and Access) Act 2025

The DUAA replaced the old Article 22 prohibition with Article 22A UK GDPR. The change is real: instead of banning solely automated decision-making outright, the new framework creates a right of challenge with mandatory safeguards. Employers can now rely on legitimate interests as a lawful basis for recruitment ADM — previously restricted to consent or contractual necessity. That’s useful.

But the flexibility is conditional. For UK businesses procuring AI recruitment tools, two compliance paths exist under the new framework: accept that ADM is occurring, acknowledge it, and implement the required safeguards; or redesign processes so a human plays a genuine role in each decision for each candidate. Given typical UK hiring volumes, the first path is more realistic. That makes the safeguard requirements mandatory, not optional.

One area the DUAA didn’t fix: where special category data is involved (such as disability, health status, or ethnicity, which AI tools frequently infer from CV content or communication patterns), the stricter pre-DUAA rules apply in full. That’s not a grey area. It’s a hard line.

The UK and EU Regulatory Landscape for AI Recruitment Tools

The ICO’s framework governs domestic obligations. For UK employers with EU operations, a second layer applies. The EU AI Act classifies recruitment, candidate selection, and evaluation tools as high-risk AI systems under Annex III — triggering conformity assessments, technical documentation, and human oversight obligations that go beyond what the DUAA requires. Our analysis of UK and EU AI compliance obligations for employers covers where the two frameworks diverge and what it means for UK businesses hiring across both jurisdictions.

The Equality Act 2010 runs alongside both regimes independently, and applies to every AI recruitment tool a UK employer procures, regardless of vendor origin. Liability for AI-driven indirect discrimination stays with the employer. “The algorithm produced the shortlist” is not a defence in an Employment Tribunal. Bias testing is the employer’s obligation, not a question to outsource to the vendor.

What UK Employers Must Do Now

The ICO’s requirements apply now, under existing law as reinterpreted through the DUAA framework. Four obligations are non-negotiable for any employer using AI in hiring.

Complete a Data Protection Impact Assessment before procuring or deploying any AI hiring tool — ideally at the procurement stage, before signing anything. The DPIA must assess privacy risks and specifically examine whether the vendor uses candidate data to train, test, or maintain the AI model. The ICO found this repurposing — often without candidate knowledge — is where the majority of compliance failures originate.

Be transparent with candidates. Privacy notices must clearly state that ADM is being used, explain its logic, and describe its likely consequences. A single line buried in a general privacy policy won’t pass scrutiny.

Provide a genuine right to challenge. Candidates must be told how to request human review of an automated decision, and that process must function. A form that goes nowhere is not a process.

Run documented bias monitoring. Monthly bias reviews covering protected characteristics under the Equality Act are cited by the ICO as good practice. Ask vendors about their own bias testing during procurement — and get the answers in writing, in the contract.
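A monthly bias review can start from something as simple as comparing shortlisting rates across groups. The sketch below is illustrative only: the input format (pairs of group label and shortlisting outcome) and the function names are assumptions, and the 0.8 threshold is borrowed from the US "four-fifths" rule of thumb. It is not a test set by the ICO or the Equality Act, so treat a flag as a trigger for closer review rather than a legal conclusion.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, shortlisted) pairs. Returns {group: rate}."""
    totals, selected = Counter(), Counter()
    for group, shortlisted in outcomes:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was shortlisted, so there is no disparity to measure.
        return {g: 1.0 for g in rates}
    return {g: r / best for g, r in rates.items()}


def flag_groups(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the (assumed) threshold."""
    ratios = impact_ratios(selection_rates(outcomes))
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Usage with made-up data: group A shortlisted 5 of 10, group B 3 of 10.
sample = (
    [("A", True)] * 5 + [("A", False)] * 5
    + [("B", True)] * 3 + [("B", False)] * 7
)
print(flag_groups(sample))  # prints ['B']
```

With small monthly samples the ratios will be noisy, so it is worth recording the counts alongside each ratio and keeping the dated output as part of the documented review.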

The employer compliance picture in 2026 extends beyond the ICO. Employment Rights Act 2025 obligations for UK employers introduce new hiring risk from July 2026, alongside the ICO’s March guidance, so the same HR and legal teams need to manage both simultaneously.

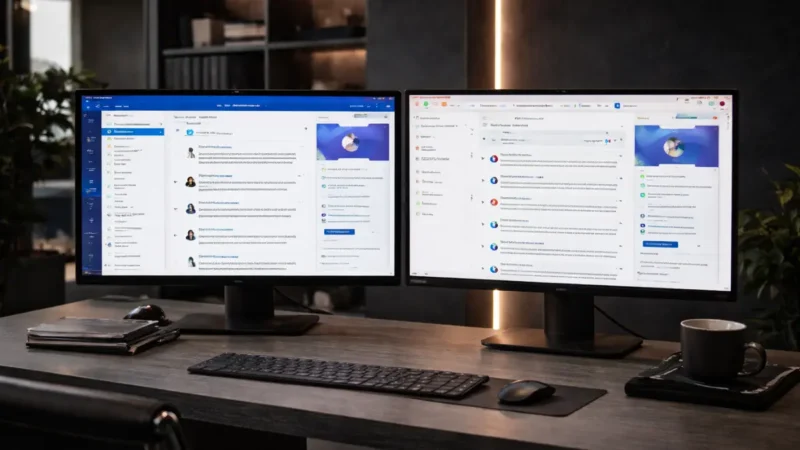

AI Recruitment Tools UK Employers Should Evaluate in 2026

Choosing AI recruitment tools that UK employers can use compliantly means asking three questions before any commercial decision: how does the tool handle candidate data after submission; how does it enable genuine human involvement at each hiring stage; and what bias testing documentation can the vendor provide?

Zoho Recruit is the most accessible compliant option for UK SMEs. AI resume screening, candidate scoring, and automated workflows are all configurable; human approval steps can be enforced before any shortlisting decision takes effect. A free plan is available for small teams; paid plans from approximately £25 per user per month (verify current GBP pricing on Zoho’s UK page before committing). Zoho’s EU data centres support UK GDPR data residency, and the platform integrates with Zoho Books and HR if you are already in that ecosystem.

Workable suits teams hiring at mid-market volume. Its AI screening assistant scores candidates and surfaces match explanations, but the platform is built around structured scorecards and approval workflows — human decision points are baked into the default process, not bolted on. Check Workable’s UK site for current GBP pricing and local support terms before committing.

Manatal is worth evaluating for smaller UK teams hiring at modest volumes. AI candidate recommendations and resume parsing are solid at entry-level pricing; the platform is used across UK and EU markets and handles standard GDPR data residency requirements.

For any of these: request the vendor’s data processing agreement and ask specifically whether candidate data is used for AI model training. The ICO’s March 2026 report shows this is the question most employers aren’t asking — and the one that creates the most significant compliance exposure.

Our comparison of best HR software for UK SMEs covers broader HR platforms where AI recruitment features are bundled alongside ERA 2025 compliance tools, payroll integration, and absence management.

FAQ

What is automated decision-making in recruitment under UK law?

ADM in recruitment means a decision about a candidate is made solely by automated processing — with no meaningful human involvement — that has a legal or similarly significant effect. Under Article 22A UK GDPR as amended by the Data (Use and Access) Act 2025, candidates have the right to challenge such decisions and request human review. Employers must disclose when ADM is in use and explain how it works.

Does the ICO’s March 2026 guidance create new legal obligations?

No. The report and draft guidance consolidate existing UK GDPR obligations as they apply to AI recruitment tools — no new legislation was introduced. The consultation is open until 29 May 2026; employers and HR professionals can respond via the ICO’s website. Final guidance will follow after that date.

What counts as meaningful human involvement under ICO rules?

The reviewer must have the authority, discretion, and competence to change the outcome of an automated decision before it takes effect. Approving an AI-generated shortlist without genuine capacity to override it doesn’t qualify. The ICO is explicit: rubber-stamping is not meaningful involvement.

What should UK employers ask AI recruitment vendors before procurement?

When evaluating the AI recruitment tools that vendors supply in the UK, four questions matter: whether candidate data is used to train or maintain the AI model; what bias testing the tool undergoes and how often; what data residency options are available for UK GDPR compliance; and whether the tool can be configured to enforce human approval at each decision stage. The answers should be in the contract, not just the sales conversation.

For broader analysis of how AI regulation is reshaping UK business operations in 2026, explore the ObvioTech AI & Automation section.